Big Data

Data empowerment for smarter business and competitiveness

In an age of unparalleled data proliferation, leading enterprises understand that harnessing this data and turning it into useful information has become increasingly crucial to data-driven thinking and business decision making. “Big data” has become the lifeblood of IT innovation, helping businesses stay ahead of the curve.

What is big data?

Software engineering practices have long incorporated the collection, storage, and analysis of large amounts of raw data. The key differentiator of “big data” as a unique practice is its scale. The three V’s that characterize big data are volume, velocity, and variety, all of which can be an order of magnitude beyond what we’ve been used to in the past.

In this age of mobile computing and social media, data is generated through a variety of channels, some directly through web forms, banking transactions and so on, and some indirectly through Internet searches or cookies. As well as structured or unstructured text, it can also take the form of audio, images, and video.

“Big data” encompasses the technologies, frameworks and processes required to turn large volumes of data into usable information to help businesses remain competitive, create new products and services, generate additional revenue, and improve customer loyalty.

Benefits of a big data solution

Big data can play a key role for companies in every business sector. The more current and accurate your information, the better positioned you are to grow through smart business strategies and informed decision-making, opening up new opportunities. Data analytics can provide insights into current market conditions and trends, as well as into customer needs, behaviours, purchasing patterns, and satisfaction scores. With this information, you can:

Optimize business flows and respond quickly to changing market conditions.

Improve what you offer and find new ways to fill the gaps.

Develop personalized buying options tailored to your customers' individual needs.


How Qualicom can help

Adopting big data practices is more than just implementing another technology platform. You need to consider a wide range of factors in order to ensure a successful implementation.

In our experience, the key challenges businesses face in developing a big data solution are lack of expertise in the tools and technologies, lack of understanding of how to manage the infrastructure, concerns about cost, and—especially in a cloud environment—how to ensure security.

Qualicom has the expertise to address these concerns, enabling you to focus on your core business.

We can consult with your business and IT teams to help guide you through the complexities of a big data solution. We can also follow this up with a turn-key implementation, setting you up with a system that enables you to achieve your business goals and realize a sustained competitive advantage.

Our approach

Combining big data with a cloud-based solution can deliver cost advantages, both from a data storage perspective and through the identification of more efficient ways of doing business.

Post-production

We can provide support in the areas of performance, data governance strategies, and generally ensuring that you have trust in the integrity of the information and insights being produced. We can also conduct knowledge transfer sessions to help you better understand the implementation and become self-sufficient in supporting the system.

Integrated data system development

We can assist you in identifying and accessing your internal and external data sources with a goal of developing an integrated system. We can then refine and massage the data using a multi-stage process that includes filtering, extraction, validation, cleansing, aggregation and visualization.
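To make the multi-stage process more concrete, here is a minimal Python sketch that runs a few hypothetical sales records through filtering, extraction, validation, cleansing, aggregation, and a crude text-only visualization. The record format, field names, and validation rules are assumptions made for illustration; a production pipeline would typically rely on the tools listed under Big data technologies rather than hand-written functions.

```python
from collections import defaultdict

# Hypothetical raw input: "region,amount" lines mixed with noise.
RAW_LINES = [
    "region,amount",       # header row
    "north, 120.50",
    "south,80",
    "",                    # blank line
    "North,  30.00 ",
    "east,not-a-number",   # unparseable amount
    "west,-5",             # negative amount
]

def filter_lines(lines):
    """Filtering: drop blank lines and the header row."""
    return [ln for ln in lines if ln.strip() and not ln.startswith("region,")]

def extract(lines):
    """Extraction: split each line into (region, raw_amount) fields."""
    return [tuple(part.strip() for part in ln.split(",", 1)) for ln in lines]

def validate(rows):
    """Validation: keep rows whose amount parses as a non-negative number."""
    valid = []
    for region, raw_amount in rows:
        try:
            amount = float(raw_amount)
        except ValueError:
            continue
        if amount >= 0:
            valid.append((region, amount))
    return valid

def cleanse(rows):
    """Cleansing: normalize region names so 'North' and 'north' match."""
    return [(region.lower(), amount) for region, amount in rows]

def aggregate(rows):
    """Aggregation: total amount per region."""
    totals = defaultdict(float)
    for region, amount in rows:
        totals[region] += amount
    return dict(totals)

def visualize(totals):
    """Visualization: a text bar chart, one '#' per 10 units."""
    for region, total in sorted(totals.items()):
        print(f"{region:<6} {'#' * int(total // 10)} {total:.2f}")

totals = aggregate(cleanse(validate(extract(filter_lines(RAW_LINES)))))
visualize(totals)
```

Keeping each stage as a separate function mirrors the staged approach described above: each step can be tested, monitored, and replaced independently.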

Business value identification


Data modelling and enablement

Working with your client services team, we can help you to identify optimal approaches to data modelling and implement a solution providing valuable business insights.

Big Data Architecture

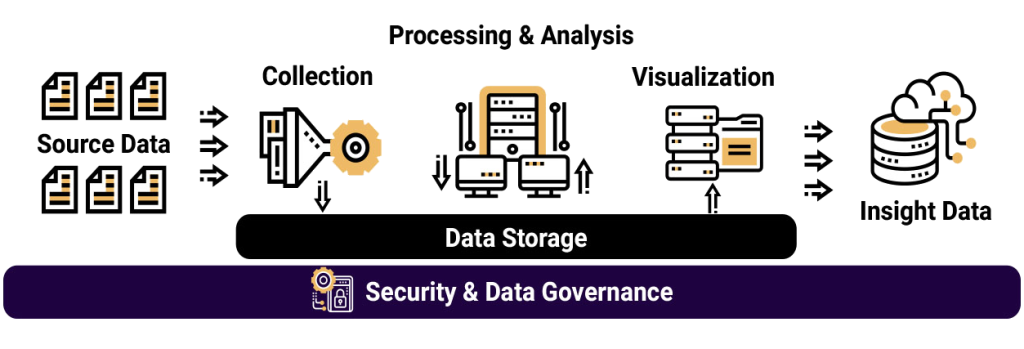

At a high level, a big data environment includes the following components and processes.

- Data is captured from various sources to feed into the data pipeline.

The captured data is rarely homogeneous. It may be structured (e.g., tabular content), semi-structured (e.g., freeform but tagged content), or unstructured (e.g., video content). The velocity of the data determines whether it is collected in batches or in real time: large volumes of slow-moving data are typically batched using an ETL program, while fast-moving content such as social media feeds is processed using a streaming framework. Data may also be transformed as it is loaded into the data store.
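The difference between batch and streaming collection can be sketched in a few lines of Python. This is a toy illustration, not how any particular framework works internally: the batch function sees the whole bounded dataset at once, while the streaming function consumes events one at a time and emits counts per fixed-size ("tumbling") time window. The event format and window size are hypothetical, and the sketch assumes events arrive in timestamp order, which real streaming frameworks such as Apache Flink or Apache Storm do not require.

```python
from collections import Counter
from typing import Iterable, Iterator


def batch_count(records: list[str]) -> Counter:
    """Batch: the dataset is bounded and fully available, so count it in one pass."""
    return Counter(records)


def stream_counts(
    events: Iterable[tuple[int, str]], window_seconds: int
) -> Iterator[tuple[int, Counter]]:
    """Streaming: consume (timestamp, key) events one at a time and emit counts
    per tumbling window. Assumes events arrive in timestamp order."""
    current_window = None
    counts: Counter = Counter()
    for ts, key in events:
        window = ts // window_seconds * window_seconds  # start of this event's window
        if current_window is not None and window != current_window:
            yield current_window, counts  # previous window is complete
            counts = Counter()
        current_window = window
        counts[key] += 1
    if current_window is not None:
        yield current_window, counts  # flush the final window


# Slow-moving data, e.g. a hypothetical nightly export of order events.
print(batch_count(["order", "refund", "order", "order"]))

# Fast-moving data, e.g. hypothetical hashtag events (seconds since start).
feed = [(0, "#sale"), (3, "#sale"), (7, "#launch"), (12, "#sale")]
for start, window_counts in stream_counts(feed, window_seconds=5):
    print(start, dict(window_counts))
```

The streaming version produces a result for each window as soon as that window closes, rather than waiting for the whole dataset, which is why it suits high-velocity sources.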

Large volumes of data are generally stored in one of two forms: a data warehouse or a data lake. A data warehouse provides better performance for structured data that business users can interpret relatively easily, whereas a data lake can hold any kind of data, including unstructured data.
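One common way to describe this difference is "schema-on-write" versus "schema-on-read". The minimal Python sketch below uses an in-memory `sqlite3` database as a stand-in for a warehouse, which rejects records that do not fit its table, and a plain list of raw strings as a stand-in for a lake, which accepts anything and applies structure only when a query reads it. The table, field names, and sample records are hypothetical.

```python
import json
import sqlite3

# "Warehouse" stand-in: the schema is enforced when data is written.
warehouse = sqlite3.connect(":memory:")
warehouse.execute("CREATE TABLE sales (region TEXT NOT NULL, amount REAL NOT NULL)")


def load_into_warehouse(record: dict) -> bool:
    """Schema-on-write: reject records that do not match the table schema."""
    try:
        warehouse.execute(
            "INSERT INTO sales (region, amount) VALUES (?, ?)",
            (record["region"], float(record["amount"])),
        )
        return True
    except (KeyError, TypeError, ValueError, sqlite3.IntegrityError):
        return False


# "Lake" stand-in: raw text of any shape is stored as-is.
lake: list[str] = []


def load_into_lake(raw: str) -> None:
    lake.append(raw)


def read_sales_from_lake() -> list[tuple[str, float]]:
    """Schema-on-read: interpret raw objects only when a query needs them."""
    rows = []
    for raw in lake:
        try:
            obj = json.loads(raw)
            rows.append((obj["region"], float(obj["amount"])))
        except (KeyError, TypeError, ValueError):  # JSONDecodeError is a ValueError
            continue  # not sales data (e.g. a log line); skip it for this query
    return rows


load_into_warehouse({"region": "north", "amount": "120.5"})  # accepted
load_into_warehouse({"region": "south"})                     # rejected: no amount
for raw in ['{"region": "south", "amount": 80}', "GET /index.html 200",
            '{"caption": "store photo"}']:
    load_into_lake(raw)

print(warehouse.execute("SELECT region, amount FROM sales").fetchall())
print(read_sales_from_lake())
```

The warehouse pays the cost of validation up front, which makes later queries simple and fast; the lake defers that cost, which keeps ingestion flexible but pushes interpretation onto every reader.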

Distributed frameworks such as Apache Hadoop and Spark are used to further process and analyze large volumes of data in the data store. The processed data is then saved back into the data store for downstream processing.
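Frameworks such as Hadoop MapReduce and Spark split data into partitions, process each partition in parallel, then group and combine the intermediate results. The sketch below simulates that map, shuffle, and reduce model in a single Python process with a classic word count; the partitions and sentences are hypothetical, and the loop over partitions stands in for work that a cluster would spread across nodes.

```python
from collections import defaultdict
from itertools import chain

# Input split into partitions, much as a distributed store splits files into blocks.
partitions = [
    ["big data needs scale", "data lakes store raw data"],
    ["spark processes data in memory"],
]


def map_phase(lines):
    """Map: emit a (word, 1) pair for every word in one partition."""
    return [(word, 1) for line in lines for word in line.split()]


def shuffle_phase(mapped_partitions):
    """Shuffle: group values by key across every partition's map output."""
    groups = defaultdict(list)
    for key, value in chain.from_iterable(mapped_partitions):
        groups[key].append(value)
    return groups


def reduce_phase(groups):
    """Reduce: combine the values for each key, here by summing them."""
    return {key: sum(values) for key, values in groups.items()}


# On a cluster, each partition would be mapped on a different node.
mapped = [map_phase(p) for p in partitions]
word_counts = reduce_phase(shuffle_phase(mapped))
print(word_counts["data"])
```

In PySpark, roughly the same computation is usually written as a chain of RDD operations (`flatMap`, `map`, then `reduceByKey`), with the framework handling partitioning, shuffling, and fault tolerance.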

Business intelligence (BI) applications are used to develop dashboards that present data in a meaningful—and often interactive—way for data analysis by business users.

Output from the big data pipeline can be used as input to other applications, for example to develop machine learning models for predictive analysis or business models for new customer acquisition.
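As a very small example of predictive analysis on pipeline output, the sketch below fits an ordinary least-squares line to hypothetical monthly sales figures and uses it to forecast the next month. Real predictive models are usually far richer; linear regression is shown only because it is one of the simplest predictive techniques. The month numbers and unit counts are invented for illustration.

```python
def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Hypothetical pipeline output: month number and units sold that month.
months = [1, 2, 3, 4]
units = [100, 110, 120, 130]

intercept, slope = fit_line(months, units)
forecast = intercept + slope * 5  # predict month 5
print(f"forecast for month 5: {forecast:.1f}")
```

On Python 3.10 and later, `statistics.linear_regression` computes the same slope and intercept from the standard library.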

At every step in the big data pipeline, data must be secured, auditable, compliant with rules governing personally identifiable information (PII), and protected from unauthorized access.
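One technique often used to protect PII inside a pipeline is pseudonymization: replacing sensitive values with consistent tokens before data reaches analysts or downstream systems. The Python sketch below uses a keyed hash (HMAC-SHA256 from the standard library) for this. The key and the set of PII field names are hypothetical, and pseudonymization is only one part of a protection strategy that would normally also include encryption, access control, and audit logging.

```python
import hashlib
import hmac

# Hypothetical key: in a real deployment this would come from a secrets manager,
# never from source code.
PSEUDONYM_KEY = b"replace-with-a-managed-secret"

# Hypothetical list of fields treated as PII.
PII_FIELDS = {"email", "phone"}


def pseudonymize(value: str) -> str:
    """Replace a PII value with a keyed hash (HMAC-SHA256, hex-encoded).

    The same input always yields the same token, so records can still be
    joined and counted. Because the hash is keyed, someone without the key
    cannot simply hash candidate values to find a match."""
    return hmac.new(PSEUDONYM_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()


def protect_record(record: dict) -> dict:
    """Return a copy of the record with PII fields pseudonymized."""
    return {
        field: pseudonymize(str(value)) if field in PII_FIELDS else value
        for field, value in record.items()
    }


raw = {"customer_id": 42, "email": "jane@example.com", "phone": "555-0100", "spend": 310.0}
safe = protect_record(raw)
print(safe)
```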

Big data technologies

Distributed storage:

- Amazon S3

- Hadoop

Database management:

- Amazon DynamoDB

- Amazon Redshift

- Apache Cassandra

- Apache HBase

- Apache Hive

- Apache NiFi

- Azure Cosmos DB

- MongoDB

Big data processing:

- Apache Flink

- Apache Giraph

- Apache Kafka

- Apache Storm

- Druid

- Hadoop MapReduce

- Hive

- Lambda

- Spark

Data management:

- Apache Airflow

- Apache ZooKeeper

- Azkaban

- Informatica ETL

- Talend

- Zaloni

Programming languages:

- Java

- Python

- R

- Scala